AI Fairness in Competitive Gaming: How TextFight Handles Bias

Explore the challenges of using AI as a competitive judge and the specific techniques TextFight employs to ensure fair, unbiased battle outcomes for every player.

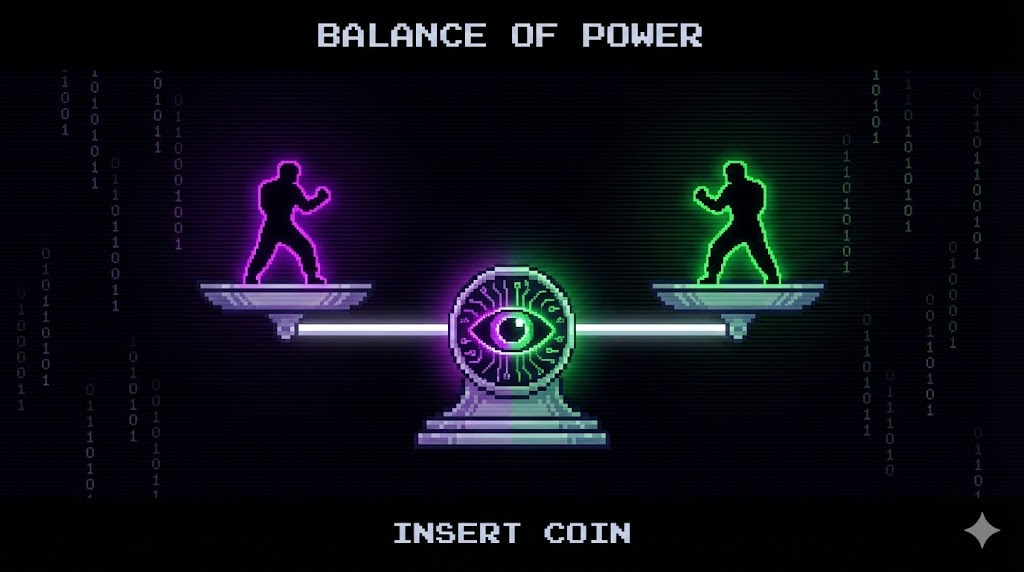

The Challenge of an AI Judge

Using artificial intelligence as a competitive judge is one of the most ambitious aspects of TextFight. In traditional esports, outcomes are determined by game mechanics: a bullet either hits the target or it does not, a ball either goes in the goal or it does not. In TextFight, the outcome is determined by an AI model evaluating two pieces of creative writing and deciding which one would win in a fight. This introduces a fundamentally different set of fairness challenges.

The core question is straightforward: can an AI judge be fair? The answer is nuanced. AI models are incredibly powerful pattern matchers, but they can also carry biases inherited from their training data. Ensuring that these biases do not influence competitive outcomes is an ongoing engineering and design challenge that the TextFight team takes seriously.

Understanding AI Bias in Context

AI bias in the context of text battle judging is different from bias in more commonly discussed applications like hiring or criminal justice. Here, the concern is not about protected characteristics but about whether the AI systematically favors certain types of creative content over others for reasons unrelated to quality.

For example, does the AI prefer fantasy settings over science fiction? Does it rate fighters with dramatic backstories higher than those with humorous ones? Does the order in which fighters are presented to the model influence the outcome? These are the kinds of biases that matter in a competitive text battle game.

Some of these biases are inherent in the training data. Large language models are trained on vast corpora of text that include more content about some genres and themes than others. If the training data contains more high-quality fantasy writing than science fiction writing, the model might have a subtle preference for fantasy tropes. Identifying and mitigating these tendencies requires careful testing and prompt engineering.

Position Randomization

One of the most well-documented biases in language models is position bias, the tendency to give different treatment to text depending on where it appears in the input. In a battle between two fighters, this would mean that the fighter presented first or second might have a systematic advantage.

TextFight addresses this through position randomization. Before each battle is sent to the AI, the order of the two fighter descriptions is randomly shuffled. Randomization cannot remove a position effect from any single battle, but because each description is equally likely to land in either slot, the effect averages out over many battles. Neither player can gain a systematic advantage from the order in which their description is read.
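The shuffle described above can be sketched in a few lines of Python. This is a minimal illustration rather than TextFight's actual code: the names `Presentation`, `randomize_positions`, and `map_winner` are hypothetical, and it assumes the judge reports its verdict as slot 1 or slot 2, which then has to be mapped back to the right player.

```python
import random
from typing import NamedTuple, Optional


class Presentation(NamedTuple):
    first: str     # description shown to the judge in slot 1
    second: str    # description shown to the judge in slot 2
    swapped: bool  # True if player B's fighter was placed in slot 1


def randomize_positions(
    fighter_a: str, fighter_b: str, rng: Optional[random.Random] = None
) -> Presentation:
    """Place the two descriptions in a random order with equal probability."""
    if rng is None:
        rng = random.Random()
    if rng.random() < 0.5:
        return Presentation(fighter_b, fighter_a, swapped=True)
    return Presentation(fighter_a, fighter_b, swapped=False)


def map_winner(presentation: Presentation, winning_slot: int) -> str:
    """Translate the judge's slot verdict (1 or 2) back to player 'a' or 'b'."""
    if winning_slot not in (1, 2):
        raise ValueError("winning_slot must be 1 or 2")
    slot_one_is_a = not presentation.swapped
    if winning_slot == 1:
        return "a" if slot_one_is_a else "b"
    return "b" if slot_one_is_a else "a"
```

Recording the `swapped` flag alongside each result is what makes a later aggregate analysis of position effects possible.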

The team has also tested for position effects in aggregate data. By analyzing thousands of battles and comparing win rates for first-position versus second-position descriptions, they can check that the randomization behaves as intended and that any remaining position effect is too small to detect.
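One way to run such an aggregate check is a two-sided z-test on the share of battles won by the first-position description, compared against the 50% expected if position had no effect. The sketch below is an assumption about how this could be done, not a description of TextFight's tooling. It uses a normal approximation to the binomial, which is reasonable for thousands of battles, and it assumes every battle has exactly one winner.

```python
import math
from typing import Tuple


def position_bias_z_test(first_wins: int, total: int) -> Tuple[float, float, float]:
    """Test whether the first-position win rate differs from 50%.

    Returns (observed_rate, z_score, two_sided_p_value).
    """
    if total <= 0 or not 0 <= first_wins <= total:
        raise ValueError("need 0 <= first_wins <= total and total > 0")
    observed = first_wins / total
    # Standard error of a proportion under the null hypothesis p = 0.5.
    std_err = math.sqrt(0.25 / total)
    z = (observed - 0.5) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return observed, z, p_value
```

A large p-value does not prove that position bias is absent. It only says that the data is consistent with a 50/50 split at the sample size available.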

Prompt Engineering for Fairness

The battle prompt, the set of instructions given to the AI along with the fighter descriptions, is the primary tool for shaping fair outcomes. The TextFight team has invested significant effort in crafting prompts that explicitly instruct the AI to evaluate fighters based on clearly defined criteria and to avoid common pitfalls.

The prompt includes instructions like "evaluate both fighters on creativity, strategic coherence, and narrative potential without favoring any particular genre, theme, or setting." It also includes negative instructions, things the AI should not do, such as "do not assume that a fighter described in more words is necessarily stronger" or "do not favor fighters that align with common fictional tropes over genuinely original concepts."
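To make this concrete, here is a hypothetical prompt template built from the instructions quoted above. The real TextFight prompt is not public, so the overall layout, the `Fighter 1` and `Fighter 2` labels, the line about presentation order, and the output-format line are all assumptions added for illustration.

```python
# Hypothetical template: only the quoted evaluation rules come from the article.
JUDGE_INSTRUCTIONS = """\
You are judging a battle between two fighters.

Evaluate both fighters on creativity, strategic coherence, and narrative
potential without favoring any particular genre, theme, or setting.

Do not assume that a fighter described in more words is necessarily stronger.
Do not favor fighters that align with common fictional tropes over genuinely
original concepts.
Do not let the order in which the fighters appear affect your decision.

Explain your reasoning, then name the winner as "Fighter 1" or "Fighter 2".
"""


def build_battle_prompt(first: str, second: str) -> str:
    """Combine the fixed instructions with the two (already shuffled) descriptions."""
    return (
        f"{JUDGE_INSTRUCTIONS}\n"
        f"Fighter 1:\n{first.strip()}\n\n"
        f"Fighter 2:\n{second.strip()}\n"
    )
```

Keeping the instructions in a single constant makes each prompt revision easy to diff and to associate with the battle outcomes it produced.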

Prompt engineering is an iterative process. The team regularly reviews battle outcomes, identifies patterns that suggest unfairness, and adjusts the prompt accordingly. This continuous refinement is essential because AI behavior can shift subtly with model updates, and new player strategies can expose biases that were not previously apparent.

Statistical Monitoring and Auditing

Beyond prompt engineering, the TextFight team employs statistical monitoring to detect bias in aggregate outcomes. They track win rates across various dimensions: fighter genre, description length within the 300-character limit, use of specific vocabulary, and more. If any dimension shows a statistically significant correlation with winning that is not explained by legitimate quality differences, it triggers a review.

For example, if fighters described in exactly 300 characters win significantly more often than those described in 200 characters, that might indicate a length bias. If fighters with names drawn from mythology win more often than those with invented names, that could indicate a cultural bias in the model. These patterns are monitored continuously.
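A simple version of this kind of check compares the win rates of two groups of fighters, for instance two description-length buckets, with a two-proportion z-test. The sketch below is illustrative only: `GroupStats`, `needs_review`, and the 0.01 significance threshold are assumptions, and the test treats fighters as independent observations, which is a simplification because every battle produces exactly one winner and one loser.

```python
import math
from typing import NamedTuple, Tuple


class GroupStats(NamedTuple):
    wins: int
    battles: int


def two_proportion_z_test(a: GroupStats, b: GroupStats) -> Tuple[float, float]:
    """Return (z_score, two_sided_p_value) for the difference in win rates."""
    if a.battles <= 0 or b.battles <= 0:
        raise ValueError("both groups need at least one battle")
    rate_a = a.wins / a.battles
    rate_b = b.wins / b.battles
    # Pooled win rate under the null hypothesis that both groups are equal.
    pooled = (a.wins + b.wins) / (a.battles + b.battles)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / a.battles + 1 / b.battles))
    if std_err == 0:
        return 0.0, 1.0  # no variation at all, so nothing to test
    z = (rate_a - rate_b) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


def needs_review(a: GroupStats, b: GroupStats, alpha: float = 0.01) -> bool:
    """Flag the dimension for human review if the gap is statistically significant."""
    _, p_value = two_proportion_z_test(a, b)
    return p_value < alpha
```

A flag from a check like this is only a trigger for investigation. It cannot tell whether the gap comes from model bias or from legitimate quality differences between the groups, and monitoring many dimensions at once calls for a stricter threshold to limit false alarms.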

The team also conducts periodic audits in which they create controlled test battles with known quality levels and verify that the AI ranks them correctly. These audits serve as a ground-truth check that the system is performing as intended.
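An audit of this kind could be structured as a small harness that feeds each controlled pair to the judge in both orders and scores the results. Everything here is a hypothetical sketch: `AuditCase`, `AuditReport`, and `run_audit` are invented names, and `judge` stands in for whatever function calls the model and returns 1 or 2 for the winning slot.

```python
from typing import Callable, NamedTuple, Sequence


class AuditCase(NamedTuple):
    stronger: str  # description the auditors consider clearly better
    weaker: str    # description the auditors consider clearly worse


class AuditReport(NamedTuple):
    accuracy: float           # share of verdicts that picked the stronger fighter
    order_consistency: float  # share of cases with the same winner in both orders


# A judge takes (slot 1 text, slot 2 text) and returns the winning slot, 1 or 2.
Judge = Callable[[str, str], int]


def run_audit(judge: Judge, cases: Sequence[AuditCase]) -> AuditReport:
    """Run every case in both presentation orders and summarize the verdicts."""
    if not cases:
        raise ValueError("at least one audit case is required")
    correct = 0
    consistent = 0
    for case in cases:
        right_when_first = judge(case.stronger, case.weaker) == 1
        right_when_second = judge(case.weaker, case.stronger) == 2
        correct += int(right_when_first) + int(right_when_second)
        # Same fighter chosen in both orders, whether or not it was the right one.
        consistent += int(right_when_first == right_when_second)
    return AuditReport(correct / (2 * len(cases)), consistent / len(cases))
```

Low accuracy with high consistency suggests the judge disagrees with the auditors about quality, while low consistency points to a position effect.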

Transparency with Players

Fairness is not just a technical problem. It is also a communication challenge. Players need to trust that the system is fair, and that trust is built through transparency. TextFight addresses this by making battle narratives detailed and explanatory. The AI does not simply announce a winner. It explains its reasoning, describing what each fighter did well and why the winner prevailed.

This narrative transparency serves a dual purpose. For the player, it provides feedback that helps them improve. For the system, it creates an accountability mechanism. If the AI narrative contradicts the quality visible in the fighter descriptions, players can identify and report it, and the team can investigate.

The TextFight team also communicates openly about the limitations of AI judging. They acknowledge that no AI system is perfectly fair, that biases can exist and are actively monitored, and that player feedback is a critical input for ongoing improvement. This honesty builds more trust than any claim of perfection ever could.

The Bigger Picture: AI Fairness in Gaming

TextFight's approach to AI fairness has implications beyond a single game. As AI becomes more prevalent in competitive gaming, whether as a referee, a content generator, or a matchmaking system, the questions of bias, transparency, and accountability will become central to the industry.

The techniques TextFight employs (position randomization, prompt engineering, statistical monitoring, and transparent narratives) form a framework that other AI-powered games can adapt. The key insight is that fairness is not a feature you ship once and forget. It is a continuous process that requires ongoing investment, monitoring, and responsiveness to player feedback.

For players, understanding how fairness works behind the scenes can actually enhance the competitive experience. When you know that the system is actively working to be fair, you can focus on what matters: writing the best possible fighter and letting your creativity speak for itself.